Hey 👋,

Happy Tuesday!

I’ve paid for probably a hundred AI tools over the past two years and gone back to almost none of them. Not because they were bad. Most of them worked exactly as advertised. I just didn’t have a reason to open them a second time.

That’s a strange sentence to write, because it contradicts how we normally think about product quality. If something works, you keep using it. If you stop using it, something must have been wrong with it. But with AI tools, that logic breaks down. The product works fine. You just didn’t need it. You thought you did when you signed up, because the first experience was impressive enough to feel like need. But it wasn’t need. It was curiosity. And curiosity, once satisfied, doesn’t come back.

I don’t think most people realize how common this is, because nobody thinks of themselves as the problem. You don’t tell yourself “I signed up for that AI writing tool out of curiosity.” You tell yourself “I should use that more” and then you never do, and eventually you forget to cancel, or you cancel without really thinking about why. The product didn’t fail you. You just never had a recurring reason to use it.

Now multiply that by millions of users, across thousands of AI startups, during the largest venture capital wave in history, and you start to see the problem.

Today’s Open Scout is brought to you by… SurveyMonkey

The broken thermometer

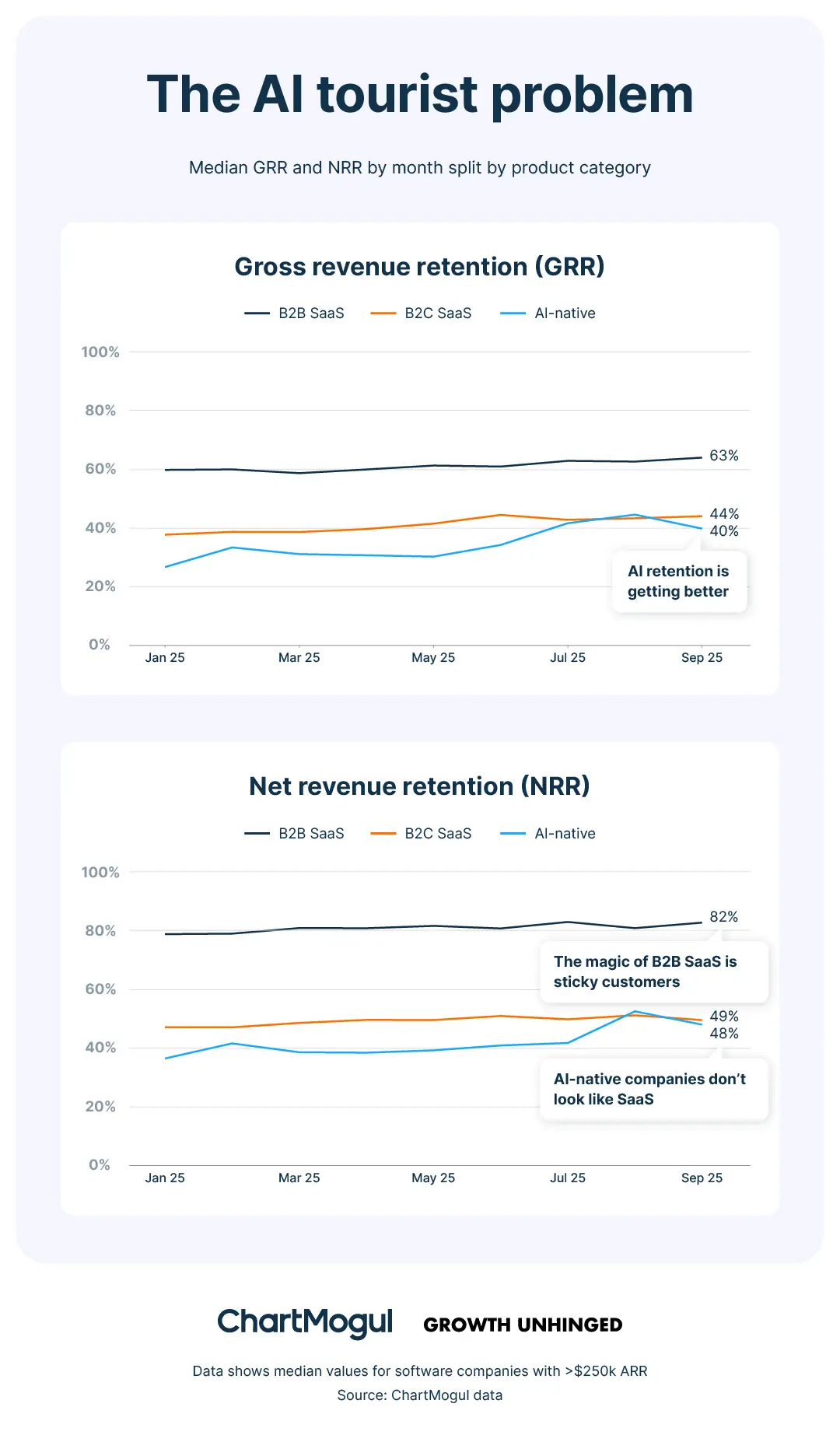

The way we evaluate product-market fit was built for a world where paying meant needing. In SaaS, if someone gives you money every month, they have a reason to. Their data lives in the product. Their team is trained on it. Nobody pays for Salesforce out of curiosity. Payment is evidence of need, and every metric we have, ARR, MRR, net revenue retention, assumes this. AI breaks that assumption. In AI, paying can just mean curious. And no standard metric separates the two.

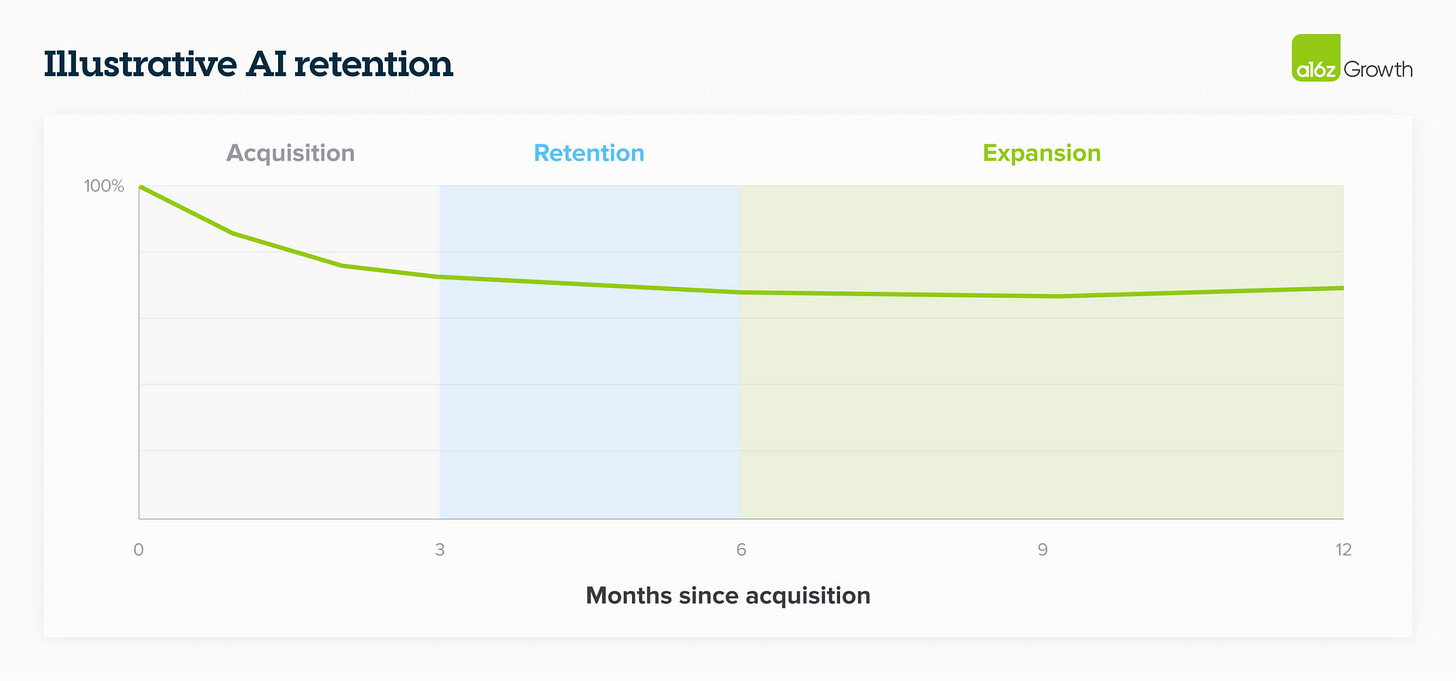

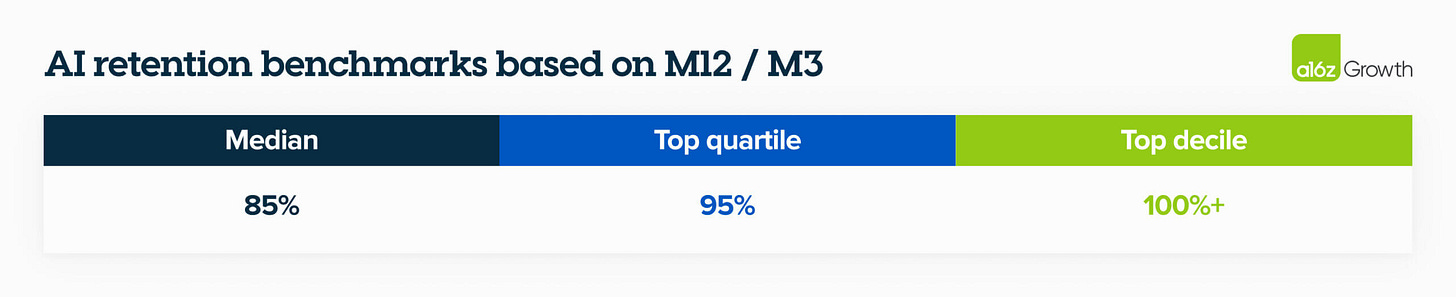

A16z figured this out and built a framework around it, but most people are reading their work wrong. Everyone focuses on the headline finding: AI companies lose a huge chunk of users in the first three months as “AI tourists” churn out. That part gets quoted everywhere. The more important part is what a16z recommends doing about it: throw out the first three months entirely. Rebase to M3. Your real customer base is whoever stuck around after the tourists left. The metric that matters is M12/M3, what those committed users do over a full year.
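To make the rebasing concrete, here is a minimal Python sketch of the M12/M3 idea. It assumes you have monthly active-user counts for a single signup cohort. The cohort numbers, the function name `rebased_retention`, and the choice to count active users rather than revenue are all illustrative assumptions, not a16z's own data or tooling.

```python
from typing import Sequence


def rebased_retention(
    active_by_month: Sequence[int],
    base_month: int = 3,
    target_month: int = 12,
) -> float:
    """Active users at target_month divided by active users at base_month.

    active_by_month[0] is the signup month (M0), active_by_month[3] is M3,
    and so on. A result above 1.0 means the rebased cohort grew.
    """
    if not 0 <= base_month < target_month < len(active_by_month):
        raise ValueError("need data through target_month, with base_month earlier")
    base = active_by_month[base_month]
    if base == 0:
        raise ValueError("no users left at base_month, ratio is undefined")
    return active_by_month[target_month] / base


# Hypothetical cohort: a steep tourist drop-off, then a slow climb after M3.
cohort = [1000, 520, 380, 300, 290, 285, 280, 280, 285, 290, 295, 300, 310]

m12_m0 = rebased_retention(cohort, base_month=0)  # the conventional view
m12_m3 = rebased_retention(cohort)                # the rebased view

print(f"M12/M0: {m12_m0:.0%}")
print(f"M12/M3: {m12_m3:.0%}")
```

In this made-up cohort, the conventional view says most signups are gone by month twelve, while the rebased view says the users who survived the first three months are slightly more numerous a year in. The same ratio could be computed on revenue instead of user counts if you care about expansion.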

When you measure this way, the picture changes. The best AI companies show retention curves that actually smile, curving upward as committed users expand their usage. A16z’s conclusion, which the doom narrative ignores: “Could we see 150% NDR at scale? For the first time, the answer might be yes.”

So the good companies are fine. The problem is the hundreds that aren’t, where M3 comes and the curve never flattens, because there weren’t enough real users underneath the tourists to sustain a business. For those companies, the first three months of growth weren’t signal. They were noise. And the capital decisions made during that noise are already locked in.

Why AI is different

Curiosity-driven spending has always existed. But AI makes it qualitatively worse for a reason that’s easy to miss: the normal correction mechanism doesn’t work.

With most previous technology hype cycles, there was a built-in save. The product overpromised, and reality caught up fast. 3D TVs were dim and required annoying glasses. Google Glass made you look weird. The metaverse was empty. In each case, people figured out within weeks that the product wasn’t what they hoped. That fast disappointment was actually protective. It kept the curiosity cycle short and the capital damage contained.

AI products don’t overpromise. That’s the trap. ChatGPT really does write a decent email. Google’s Nano Banana really does generate a striking image. The first experience delivers on its claim. So the thing that normally snaps people out of curiosity purchases, the moment where reality falls short of the demo, doesn’t happen. Or rather it happens months later, in a form that’s much harder to notice: the slow realization that you haven’t opened the product in three weeks. Not because it stopped working. Because you never had a daily reason to use it.

You can see this playing out in real time. When Google launched its Nano Banana image model, 200 million images were generated and 10 million new users joined Gemini in the first week. Two hundred million banana images. That is not latent demand for AI banana art. That is pure curiosity, the brain’s reward system firing on novelty, at a scale that registers as a growth event on Google’s dashboard. Some of those 10 million users will stick around and find real use cases for Gemini. Most won’t. But for one week, they looked exactly like real users.

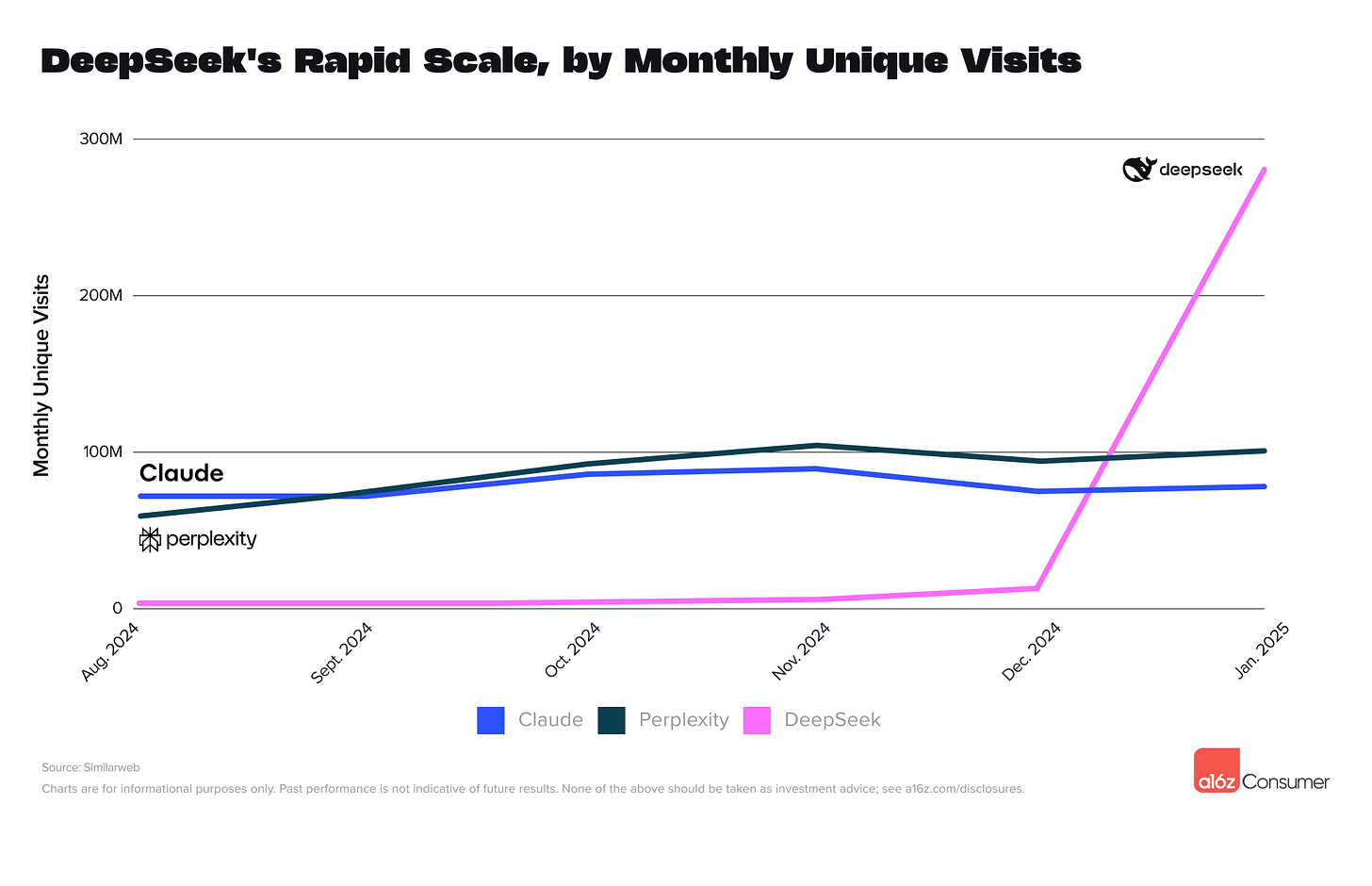

DeepSeek had a similar arc, though the reasons are muddier. It launched in January 2025 and ranked second globally in ten days. By mid-2025, traffic had dropped over 40%. Some of that is probably geopolitics and model quality rather than pure curiosity. But the shape of the curve, explosive arrival followed by steep decline, is the curiosity signature regardless of what’s causing it.

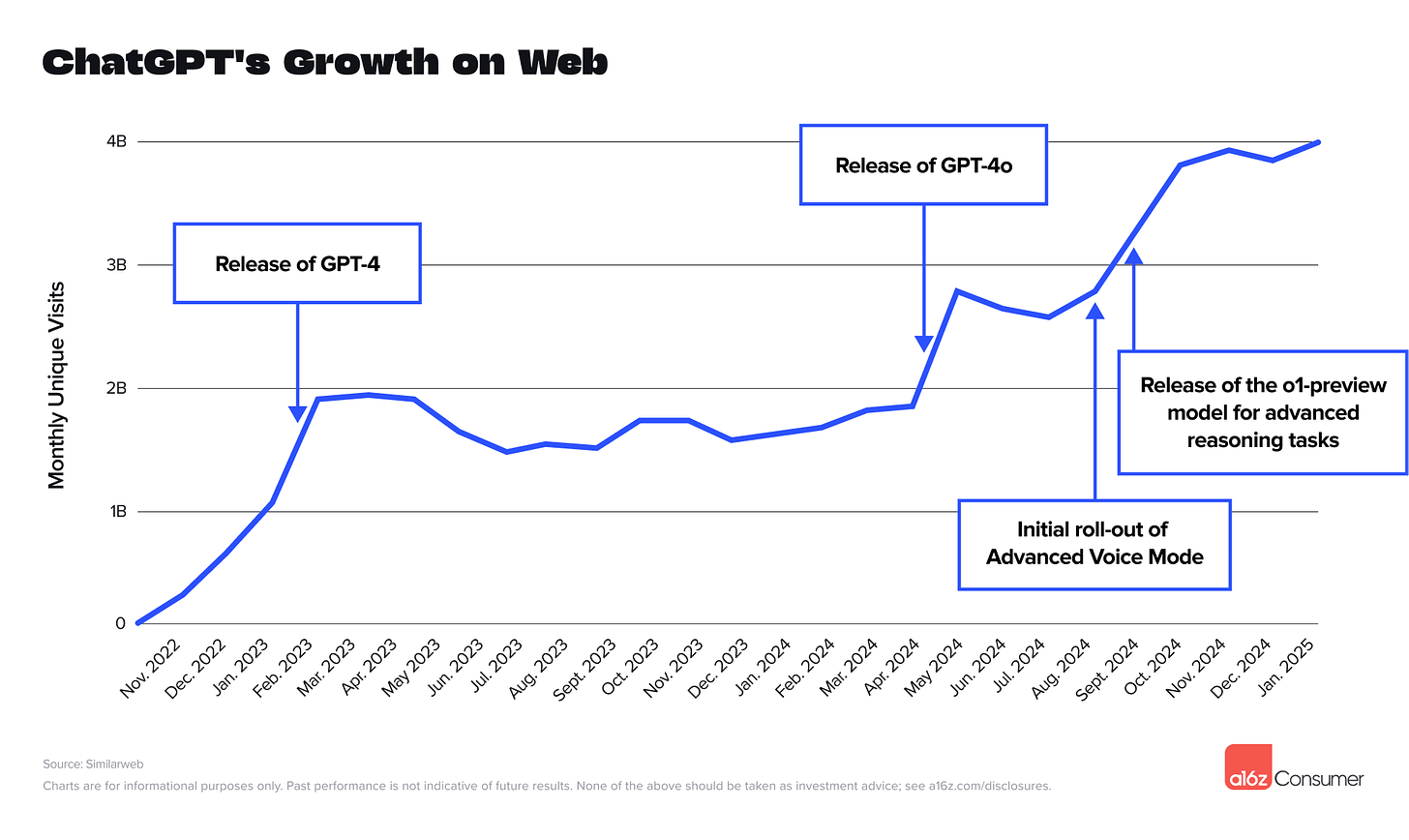

Even ChatGPT went through this. A16z noted that ChatGPT’s growth plateaued for nine months after launch because “many consumers found it intriguing, but lacked compelling daily use cases.” Nine months. The biggest AI product in the world, built by the best-funded AI company, needed nine months to cross from curiosity to utility. It did cross, through new models and features that pulled people into real workflows. But it crossed with billions of dollars of runway behind it. A startup with 18 months of funding and a flat growth curve at month six doesn’t have that luxury.

This is where I think the problem gets really specific, and worth being precise about. The big platforms, ChatGPT, Claude, Gemini, have crossed from novelty to utility. Hundreds of millions of people use them for real work. They are not curiosity-driven products. The problem lives in the thousands of startups built on top of these platforms.

And the reason it’s so insidious is something I think of as borrowed wow. When a user tries one of these startups for the first time, the wow they feel is real. But it’s not the startup’s wow. It’s GPT-5’s wow, or Claude’s wow, or Gemini’s wow. The foundation model does the impressive thing. The startup provides a nicer interface, a vertical framing, a slightly more convenient workflow. The user gets credit-card-impressed and signs up. But the value they’re responding to doesn’t belong to the startup. It belongs to the model.

This matters because borrowed wow has no staying power. The user wasn’t responding to anything the startup built. They were responding to the capability of the model, which felt new and exciting when encountered through a fresh interface. Once the novelty of that particular interface fades, there’s nothing startup-specific holding them. They don’t churn because something better came along. They churn because the curiosity that brought them in was never about the startup in the first place.

Appfigures found that “general assistants,” what they candidly call “thin wrappers,” account for 40% of all consumer spending on the top 1,000 AI apps. Forty percent of the money going to products that are, roughly, someone else’s model with a different coat of paint. That’s a lot of borrowed wow sitting in a lot of revenue numbers.

Bessemer’s PMF playbook for AI founders says it directly: “Novelty isn’t the same as value. If users don’t integrate your product into their daily workflows, you don’t have PMF yet.” That’s the whole problem in two sentences. The wow gets you the signup. Only workflow integration gets you the retention.

Foundation Capital described the production version of this problem well: you can get to 80% of a product with 20% of the effort, which is enough to close a pilot and impress an investor. But real usage demands 99% reliability, and that last stretch takes 100x more work. Most AI startups live in the gap between 80% and 99%. Impressive enough to attract curiosity. Not reliable enough to become a habit.

The loop

Curiosity-driven revenue doesn’t just fool founders. It fools the entire system around them.

Tourists sign up. Growth spikes. VCs see the spike and write a check. The company raises at a valuation that assumes the curve continues. The fundraise generates press. Press attracts more tourists. Everyone in the loop has a reason to believe the revenue is real, because admitting otherwise means the valuation is wrong.

From inside the loop, it feels like winning. The numbers are going up. The investors are excited. The team is growing. The only people who might notice something is off are the ones staring at cohort charts six months out, and in most early-stage companies nobody is doing that, because there are a hundred more urgent things to do and the top-line numbers look great.

In 2025, AI startups captured over half of all global VC, roughly $258 billion. 60% went to rounds of $100 million or more. The pricing data from ChartMogul tells you what’s underneath: AI products under $50 a month had 23% gross retention. Above $250: 70%. The cheap products, the ones with the most explosive growth, attract the most tourists and raise the most capital. The expensive products, with slower growth but real retention, get less attention. The system selects for the wrong thing.

Sora is what happens when the loop reaches its conclusion. OpenAI’s video tool generated enormous excitement, produced genuinely remarkable videos, attracted a billion-dollar Disney partnership, and shut down in April 2026 because almost nobody had a daily reason to use it. The product worked. The demand was curiosity. And curiosity doesn’t sustain a business, even when it’s backed by OpenAI’s brand and Disney’s money.

SimpleClosure’s 2025 data shows this playing out broadly. Series A failures jumped from 6% to 14% of all closures. Gartner says the average enterprise cut its AI vendor count by 40% in six months. The experimentation phase is ending. What replaces it: prove you’re essential or get cut.

Living there vs. visiting

The companies that survive the curiosity wave share a trait that has nothing to do with having a better model. They made their product the place where work happens.

Cursor is the clearest example. It got to roughly $500 million ARR not by being the best AI coding assistant but by being the code editor. Not a plugin inside your editor. The editor itself. The thing you open when you start working in the morning. If you switch away from Cursor, you’re not just losing AI suggestions. You’re losing your keybindings, your extensions, your project configurations, your muscle memory. That’s a switching cost that has nothing to do with AI quality and everything to do with how painful it is to rearrange how you work.

That’s the difference between a product people use and a product people depend on. Usage is visiting. Dependency is living there. And the gap between them is the gap between curiosity-driven revenue and the real thing.

Perplexity’s CEO told Bessemer they track whether users who do one query come back and do another: “Initially 80% of users that do one query do another. We wanted to get this to 100% so two queries can become five, and usage becomes a habit.” That’s the right question. Not how many showed up. How many came back without being reminded, and came back again, and again, until using the product stopped being a decision and became a reflex.
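A rough way to track the same thing yourself, sketched in Python under some loud assumptions: you have a flat log with one user ID per query, and "came back" simply means the user appears at least twice. The function name `repeat_rate` and the sample log are hypothetical, and this is not Perplexity's actual method.

```python
from collections import Counter
from typing import Iterable


def repeat_rate(query_user_ids: Iterable[str], at_least: int = 2) -> float:
    """Share of users with at least one query who reached `at_least` queries."""
    counts = Counter(query_user_ids)
    if not counts:
        return 0.0
    returning = sum(1 for n in counts.values() if n >= at_least)
    return returning / len(counts)


# Hypothetical query log: one entry per query, identified by user.
log = ["ana", "ben", "ana", "cy", "dee", "ben", "ana", "eli"]

second_query_rate = repeat_rate(log)             # did one query become two?
fifth_query_rate = repeat_rate(log, at_least=5)  # did two become five?

print(f"Came back for a second query: {second_query_rate:.0%}")
print(f"Reached five queries: {fifth_query_rate:.0%}")
```

Raising `at_least` over time is one way to watch a single return visit turn into a habit. A real version would probably also bound the time window, since a second query six months later is a different signal from one the next morning.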

The test

There’s a test I find useful for thinking about this. If the product disappeared tomorrow, would the user notice?

Not “would they be mildly annoyed.” Would they notice? Would it disrupt their morning? Would they have to scramble to find a replacement? Would they complain to a colleague?

If the answer is no, the revenue is curiosity-driven. The user is paying but not depending. And revenue without dependency is a loan against future churn.

Five questions worth asking yourself honestly:

What percentage of your users who signed up three months ago are still active today? Not paying. Active.

Do your users come back to start new projects, or did they finish one thing and disappear? That’s Bessemer’s “second-bite” test. It’s the single cleanest signal separating tourists from residents.

How did your users find you? If the answer is “they saw a viral demo” or “they read a launch post,” that’s curiosity-acquisition. If the answer is “a colleague told them it saved ten hours a week,” that’s need-acquisition. The channel tells you what kind of demand you’re capturing.

If you doubled your price tomorrow, would users complain or cancel? Complaining is dependency. Cancelling is indifference. The whole difference is in which one happens.

Is the wow in your product yours, or is it the foundation model’s? If you swapped the model underneath for a competitor’s API, would the user experience change meaningfully? If not, your value layer is thinner than you think.

If you got through all five and felt uncomfortable, that discomfort is useful. It’s the feeling of seeing your revenue clearly, possibly for the first time.

The AI wave will produce some of the most valuable companies ever built. The technology is real and the companies that become essential will compound for decades. But most of the companies riding the wave haven’t crossed that line yet. They have revenue that passes every standard test for product-market fit. And it isn’t product-market fit. It’s curiosity. And the difference between those two things is the most expensive misdiagnosis in startups right now.

Thanks for reading.

If you enjoyed this issue, send it to a friend—it helps more than you think.

Back in your inbox Thursday,